“We store dates in two digits because we have ten fingers. We are still paying for that decision.”

The First Design Decision

Before any human built a computing device, the human body had already suggested the number base. Humans have 10 fingers, and most counting systems that developed independently, including the Egyptian, Greek, Roman, Chinese, and Indian ones, settled on base-10 because the fingers were the first counting device. The notable exceptions still count on the body: Mayan and several West African systems are base-20 (fingers plus toes), and Sumerian base-60 was built from sub-groupings of ten. When you count on your fingers and run out, you've reached 10. You put down a marker and start again. That marker is a tens column.

This was not a decision. It was a constraint that became a convention that became a civilization-wide standard that became the invisible assumption embedded in every date, every timestamp, every price, and every measurement system in human history.

Why Base-10 Is Wrong

Base-10 is anatomically natural and mathematically awkward. The number 10 has only four divisors: 1, 2, 5, and 10. You cannot divide 10 evenly by 3, 4, 6, 7, 8, or 9.

Base-12 has six divisors: 1, 2, 3, 4, 6, and 12. This is why clocks are divided into 12 hours and 60 minutes (60 = 5 × 12), why the imperial measurement system is partly duodecimal (12 inches in a foot), and why the Babylonians used base-60 for astronomy (60 divides cleanly by 2, 3, 4, 5, 6, 10, 12, 15, 20, and 30). The 360 degrees in a circle are usually credited to the Babylonians as well, who had a much better number base than we do. The exact origin is uncertain, but two explanations are commonly given: a year is roughly 360 days long, and a radius fits around its own circle as a chord exactly six times, so six arcs of 60 parts each make 360.
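The divisor counts are easy to check by brute force. The following is a minimal sketch; the helper name `divisors` is made up for illustration.

```python
def divisors(n: int) -> list[int]:
    """Return every positive integer that divides n with no remainder."""
    return [d for d in range(1, n + 1) if n % d == 0]


# Compare the three bases discussed in the text.
for base in (10, 12, 60):
    found = divisors(base)
    print(f"base-{base}: {len(found)} divisors -> {found}")
```

Base-10 comes out with four divisors, base-12 with six, and base-60 with twelve, which is the whole argument for why halves, thirds, and quarters come out clean on a clock and not on a price tag.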

We inherited base-10 from our fingers. We inherited base-60 from the Babylonians. We forgot to unify them. A long line of unit conversion problems in the history of engineering traces to this unresolved inheritance.

The Computer Mismatch

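Computers use base-2 (binary) because a transistor is most reliable when it only has to distinguish two states: on and off. This is less a choice than a consequence of physics: decimal and ternary machines have been built, but two-state circuits proved the simplest and most tolerant of noise. Every number a mainstream computer works with internally is binary.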

The interface between human base-10 and computer base-2 creates a class of errors with no solution, only mitigations:

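- Floating-point imprecision: 0.1 + 0.2 ≠ 0.3 in IEEE 754 binary floating point because 0.1, 0.2, and 0.3 have no finite binary expansion, just as 1/3 has no finite decimal expansion. Each value is rounded to the nearest representable number, and the rounding errors do not cancel. This is not a bug. It is the mathematical consequence of the base mismatch between our fingers and our transistors.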

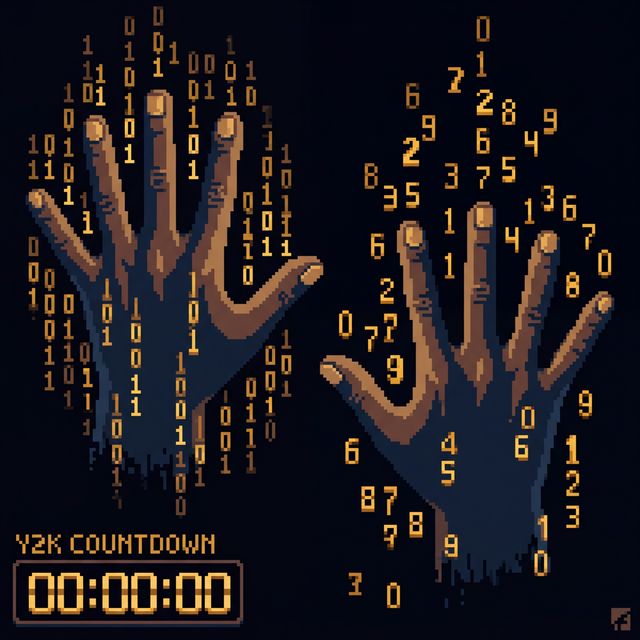

- The Y2K problem: "99" was chosen to represent 1999 because storage was expensive and developers assumed the system would be replaced before the year 2000. Two digits felt sufficient because that is how people already write and say years in base-10. Two base-10 digits = 100 possible years. Enough for now. Catastrophic later.

- The Year 2038 Problem: Unix timestamps count seconds from January 1, 1970 (UTC), traditionally in a 32-bit signed integer. A 32-bit signed integer maxes out at 2,147,483,647. Add that many seconds to January 1, 1970 and you get 03:14:07 UTC on January 19, 2038. One second later, every affected system wraps around to −2,147,483,648, which represents 20:45:52 UTC on December 13, 1901. This is Y2K for 32-bit systems. It is coming.
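All three failure modes in the list above can be reproduced in a few lines. The sketch below is illustrative only: `expand_two_digit_year`, its `pivot` of 70, `wrap_int32`, and `from_timestamp` are hypothetical names and values chosen for this example. The windowing rule stands in for the general style of Y2K mitigation rather than any particular system's fix, and the 32-bit wraparound is simulated with arithmetic because Python integers do not overflow.

```python
import math
from datetime import datetime, timedelta, timezone
from decimal import Decimal

# 1. Floating point: the binary approximations of 0.1 and 0.2 do not sum to
#    the binary approximation of 0.3.
float_sum = 0.1 + 0.2
print(float_sum == 0.3)                # False
print(math.isclose(float_sum, 0.3))    # True: compare with a tolerance
print(Decimal("0.1") + Decimal("0.2") == Decimal("0.3"))  # True: base-10 arithmetic


# 2. Two-digit years: "windowing" guesses the century from a pivot value.
def expand_two_digit_year(yy: int, pivot: int = 70) -> int:
    """Map 0..99 to a four-digit year (the pivot of 70 is an assumed value)."""
    if not 0 <= yy <= 99:
        raise ValueError("expected a two-digit year")
    return 2000 + yy if yy < pivot else 1900 + yy


print(expand_two_digit_year(99))  # 1999
print(expand_two_digit_year(5))   # 2005


# 3. Year 2038: simulate a signed 32-bit seconds counter.
INT32_MAX = 2**31 - 1
EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def wrap_int32(value: int) -> int:
    """Reduce an integer to the range of a two's-complement 32-bit value."""
    return (value + 2**31) % 2**32 - 2**31


def from_timestamp(seconds: int) -> datetime:
    """Convert seconds since the Unix epoch to a UTC datetime."""
    return EPOCH + timedelta(seconds=seconds)


last_good = from_timestamp(INT32_MAX)
after_rollover = from_timestamp(wrap_int32(INT32_MAX + 1))
print(last_good)       # 2038-01-19 03:14:07+00:00
print(after_rollover)  # 1901-12-13 20:45:52+00:00
```

The timestamp conversion uses plain `datetime` arithmetic instead of `datetime.fromtimestamp`, since negative timestamps are not handled consistently on every platform. Note that the windowing rule only moves the cliff: with a pivot of 70, the year 2070 is read as 1970.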

The Exhibit

Every date-boundary bug in computing history shares a common ancestor: a human has 10 fingers, civilization adopted base-10, and the storage optimization for representing human-scale dates in computer memory was always insufficient for the full range of dates that systems would eventually need to handle.

The Mars Climate Orbiter was lost in 1999 because one piece of software reported thruster impulse in imperial units (pound-force seconds) while another expected metric units (newton-seconds): a unit mismatch. The deeper cause: two incompatible unit systems coexist because different civilizations made different base choices based on what was convenient for their anatomy and trade goods.
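A bare number carries no unit, which is what makes this kind of failure silent. One common defence is to wrap the value in a type that stores a single canonical unit and forces the caller to name the unit on the way in. The sketch below assumes a hypothetical `Impulse` class invented for this example; it is not taken from any mission software.

```python
from dataclasses import dataclass

# One pound-force is exactly 4.4482216152605 newtons by definition.
LBF_TO_NEWTON = 4.4482216152605


@dataclass(frozen=True)
class Impulse:
    """An impulse stored internally in newton-seconds only."""

    newton_seconds: float

    @classmethod
    def from_newton_seconds(cls, value: float) -> "Impulse":
        return cls(value)

    @classmethod
    def from_pound_force_seconds(cls, value: float) -> "Impulse":
        # Convert at the boundary so nothing downstream sees imperial units.
        return cls(value * LBF_TO_NEWTON)


reported = 100.0  # a raw number produced in pound-force seconds

misread = Impulse.from_newton_seconds(reported)       # the unit is assumed
correct = Impulse.from_pound_force_seconds(reported)  # the unit is stated

# The misread value is too small by a factor of about 4.45.
print(correct.newton_seconds / misread.newton_seconds)
```

The design choice is that the only way to build an `Impulse` is through a constructor whose name states the unit, so the assumption is visible at the call site instead of buried in a data file.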

We have ten fingers. We built a civilization around that. We built computers that don't. Every year-boundary bug, every floating-point surprise, every unit conversion failure is the conversation between those two facts, unresolved.