“There was no choice but to be pioneers.”

The Story

On July 20, 1969, minutes before the Apollo 11 lunar module touched down on the Moon, the onboard computer began flashing 1202 and 1201 alarms. The alarms meant the computer was overloaded: the rendezvous radar, which was not needed for the landing, was flooding the processor with unnecessary data.

Mission Control almost called an abort.

But the software held. Margaret Hamilton's team at MIT's Instrumentation Laboratory had designed the flight software with an asynchronous priority-based architecture. When the computer detected it couldn't complete all tasks, it didn't crash. It didn't freeze. It dropped the lower-priority tasks and kept running the ones that mattered — the ones that would land the spacecraft safely. The landing continued. Humans walked on the Moon.

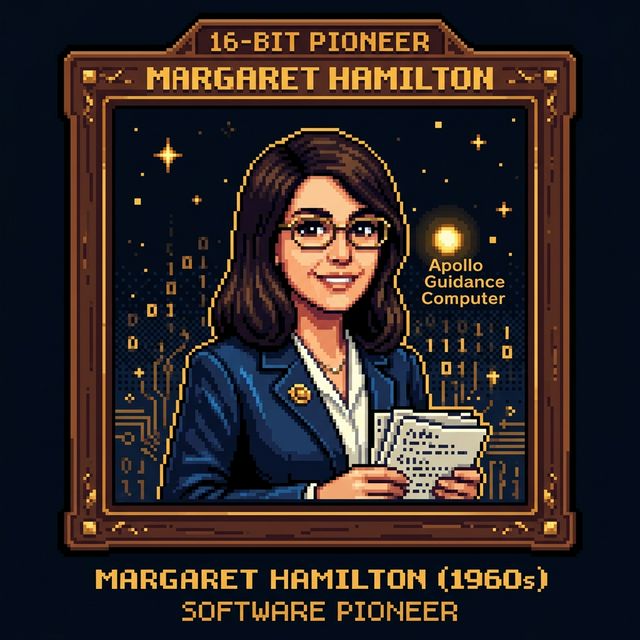

Hamilton had led the development of the onboard flight software for both the Command Module and the Lunar Module. She was 32 years old at the time of the landing. Her team wrote the code in assembly language. There was no version control, no CI/CD, no Stack Overflow to search. The software had to work the first time, in an environment where a bug could kill three astronauts live on television.

She insisted on building in error detection and recovery at a time when many engineers considered software a secondary concern to hardware. When she raised the possibility that an astronaut might accidentally select a wrong program during flight, she was told it would never happen. She was not allowed to add the error-checking code and had to settle for a note in the documentation warning against selecting P01 during flight. On Apollo 8, astronaut Jim Lovell accidentally selected P01 during flight, exactly the scenario she'd warned about, and wiped out navigation data that then had to be re-uploaded from the ground. After that, the safeguard was approved.

Hamilton is credited with coining the term "software engineering," and she used it deliberately, to argue that building software deserved the same rigor and respect as building bridges. At the time, the term was considered amusing; even her colleagues at MIT thought it was overreach. Nobody's laughing now.

Why They're in the Hall

Hamilton is both a Pioneer and a Builder, and her work anticipates nearly every resilience pattern documented in TechnicalDepth.

Priority-based task shedding under load? That's load shedding and graceful degradation, close kin to modern circuit breakers and bulkheads, implemented in 1960s assembly language on a computer with roughly 72KB of fixed memory and 4KB of erasable memory. Defensive programming against operator error? That's input validation, decades before the term existed. Insisting on error recovery when management says "that scenario will never happen"? That's every post-mortem ever written.
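Guarding against operator error can be as simple as checking a command against the current state before acting on it. The sketch below is a modern illustration in Python, not a reconstruction of the Apollo code: the `Phase` enum, the `ALLOWED_PROGRAMS` table, and the `select_program` function are all hypothetical names, and the table contents are assumptions chosen only to echo the P01 story.

```python
from enum import Enum


class Phase(Enum):
    PRELAUNCH = "prelaunch"
    IN_FLIGHT = "in_flight"


# Hypothetical table: which programs an operator may select in each phase.
# The entries are illustrative assumptions, not the real Apollo program list.
ALLOWED_PROGRAMS = {
    Phase.PRELAUNCH: {"P00", "P01"},
    Phase.IN_FLIGHT: {"P00"},
}


class RejectedCommand(Exception):
    """Raised when a command is not valid in the current phase."""


def select_program(program: str, phase: Phase) -> str:
    """Return the program to run, or raise instead of acting on a bad selection."""
    if program not in ALLOWED_PROGRAMS[phase]:
        raise RejectedCommand(f"{program} is not selectable during {phase.value}")
    return program
```

Calling `select_program("P01", Phase.IN_FLIGHT)` raises `RejectedCommand` rather than running the program, while the same selection during `Phase.PRELAUNCH` is accepted. The design choice is to fail loudly at the point of input, before any state is touched.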

Her connection to the observability domain is direct: the 1202 and 1201 alarms were, in effect, an early production alerting system that told operators what was happening in time to act on it. The computer didn't silently fail. It raised an alarm, shed load, and kept going. Many modern systems still can't do this as gracefully.
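The "raise an alarm, shed load, keep going" behavior can be sketched in a few lines. This is a minimal Python illustration of the pattern, not the Apollo Guidance Computer's actual executive: the `Job` class, the `cost` and `capacity` work units, and the `run_cycle` function are hypothetical, introduced only for this example.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass(order=True)
class Job:
    priority: int                      # lower number = more important
    name: str = field(compare=False)
    cost: int = field(compare=False)   # hypothetical work units per cycle


def run_cycle(jobs: List[Job], capacity: int,
              alarm: Callable[[str], None]) -> List[str]:
    """Run jobs in priority order until capacity runs out.

    Once a job does not fit, it and every less important job are shed
    for this cycle, and the shed list is reported through `alarm`.
    """
    ran: List[str] = []
    shed: List[str] = []
    remaining = capacity
    ordered = sorted(jobs)             # sorts by priority only
    for i, job in enumerate(ordered):
        if job.cost > remaining:
            shed = [j.name for j in ordered[i:]]
            break
        remaining -= job.cost
        ran.append(job.name)
    if shed:
        # Tell the operator what was dropped instead of failing silently.
        alarm(f"OVERLOAD: shed {', '.join(shed)}")
    return ran
```

With four jobs costing 5, 3, 2, and 4 units in priority order and a capacity of 9, the two most important jobs run, the other two are shed, and a single alarm names them. With a capacity of 14, everything runs and no alarm fires. The alarm is a callback so the caller decides where it goes: a log, a pager, or a display.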

The term she coined — software engineering — is the frame through which TechnicalDepth operates. The premise that software is engineered, not just written, that it has architecture, failure modes, and design trade-offs worth studying — that premise starts with Hamilton.